Tag: grimmelmann

Evil Is As Evil Does

by chris on Aug.09, 2010, under general

James Grimmelmann on the Google/Verizon “deal” that has been alleged to oppose net neutrality, support net neutrality, or be totally ambivalent towards it.

Grimmelmann is totally correct in the main thrust of his post:

I would like to say, though, that this secretly negotiated private “deal” is a terrible, terrible blunder on Google’s part, considered purely from the perspective of its own self-interest. Google has enjoyed a generally good relationship with many activists and civil society groups who want to protect individual freedoms online. Even if what Google is now proposing is good policy, the backroom nature of the process sends an unmistakable message to Google’s erstwhile allies: we’re with you only as long as it’s convenient for us.

and other good stuff besides.

That said, it bears noting that while Grimmelmann begins his post with:

The Verizon-Google Net neutrality deal is now public. In brief: neutrality for Plain Old Internet, transparency but not neutrality for wireless, and nothing for “Additional Online Services” unless they “threaten the availability” of POI.

it may actually be a bit more complicated than that, as the actual framework appears to leave quite a bit of wiggle room.

Non-Discrimination Requirement: In providing broadband Internet access service, a provider would be prohibited from engaging in undue discrimination against any lawful Internet content, application, or service in a manner that causes meaningful harm to competition or to users. Prioritization of Internet traffic would be presumed inconsistent with the non-discrimination standard, but the presumption could be rebutted.

…

Network Management: Broadband Internet access service providers are permitted to engage in reasonable network management. Reasonable network management includes any technically sound practice: to reduce or mitigate the effects of congestion on its network; to ensure network security or integrity; to address traffic that is unwanted by or harmful to users, the provider’s network, or the Internet; to ensure service quality to a subscriber; to provide services or capabilities consistent with a consumer’s choices; that is consistent with the technical requirements, standards, or best practices adopted by an independent, widely-recognized Internet community governance initiative or standard-setting organization; to prioritize general classes or types of Internet traffic, based on latency; or otherwise to manage the daily operation of its network.

…

Additional Online Services: A provider that offers a broadband Internet access service complying with the above principles could offer any other additional or differentiated services. Such other services would have to be distinguishable in scope and purpose from broadband Internet access service, but could make use of or access Internet content, applications or services and could include traffic prioritization.

I would never seek to second-guess Grimmelmann’s read of the law here, and if he thinks this is still a fundamental neutrality policy, I believe him. But though this framework isn’t quite a tiered Internet, it isn’t exactly a flat neutrality policy either, at least not of the kind that, say, Tim Wu would advocate.

Bigwig Burned By Buzz

by chris on Apr.05, 2010, under general

(Apologies for the alliteration. The sad truth is that I once attended a session at the NEYWC run by a senior Sports Illustrated editor. When he reviewed my journalism samples he told me that, whatever other weaknesses my style might have, it was refreshingly free of the tropes that had haunted his early writing, mainly alliteration, bad puns, and catchy clauses jammed into sentences where they didn’t belong. He then gave me a sample of that bad writing so that I would not emulate it. And I’ve been writing like that ever since.)

(Anyway,)

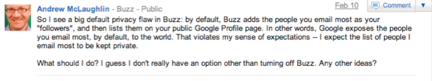

Via James Grimmelmann, the tragic story of yet another individual who found himself tripped up by the confusing design of Google Buzz.

Except, in this case, the individual was Andrew McLaughlin, i.e. the Deputy Head of Internet Policy for the White House and former Head of Global Public Policy for Google itself.

Maybe Mr. McLaughlin needs to read Grimmelmann’s Privacy as Product Safety so he can get to regulating his former employer!

edit: immediately after hitting submit I saw this blog post from Google Public Policy about the changes they made to Buzz. Good. But not good enough.

Grimmelmann and Privacy as Product Safety

by chris on Mar.03, 2010, under general

I’ve been pretty haggard with work lately, so I’m a bit late on this, but James Grimmelmann has written a great paper called “Privacy as Product Safety”, to be published in the Widener Law Journal. It’s an adaptation of his “Myths of Privacy on Facebook”, and it’s quite good.

In his “Saving Facebook”, Grimmelmann explained the “social dynamics” of privacy problems on Facebook. He canvassed the social science literature to explain how and why people used Facebook, and what their behavior could tell us about proper regulation and privacy protections.

But in this article, he’s homing in on what I’ll call the “design dynamics” that he explored in his first article – that is, how the design of Facebook (or other such services) relates to its privacy problems. This idea isn’t new – he calls them “privacy lurches” in Saving Facebook, and they’re somewhat the focus of my “Losing Face” – but what is really great about this article is how Grimmelmann maps product liability law onto the scaffold of social network sites.

For example, on Google Buzz:

“Buzz as a whole is a powerful, possibly revolutionary product—but it also launched with a serious design defect. Just as an otherwise-useful buzzsaw is still unreasonably dangerous to life and limb if it sports a flimsy handle, the auto-add feature made the otherwise-useful Buzz unreasonably dangerous to privacy.”

In “Losing Face”, I mostly gave up on law as a tool to fix the defective designs of social network sites. I’m interested, and excited, by Grimmelmann’s effort to adapt liability law to achieve an admirable end.

Losing Face: An Environmental Analysis of Privacy on Facebook

by chris on Jan.06, 2010, under papers, rfc

Yesterday, I submitted Losing Face: An Environmental Analysis of Privacy on Facebook to a variety of science and technology law reviews. Its abstract is as follows:

This Article contributes to the ongoing conversation about privacy on social network sites. Adopting Facebook as its primary example, it reviews behavioral data and case studies of privacy problems in an attempt to understand user experiences. The Article fills a crucial gap in the literature by conducting the first extensive analysis of the informational and decisional environment of Facebook. Privacy and the environment are inextricably linked: the practice of the former depends upon the dynamics and heuristics of the latter.

The Article argues that there is an environmental element to the Facebook privacy problem. Data flow differently on Facebook than in the physical world, and the architectural heuristics of privacy are absent or misleading. This counterintuitive informational environment waylays privacy practices, opens a gulf between expectation and outcome, causes a crisis in self-presentation, and facilitates what Professor Helen Nissenbaum calls a loss of contextual integrity.

The Article explores possible interventions. It explains how regulatory solutions and market forces are themselves hindered by the deficient privacy environment of Facebook and can’t solve all of its problems. This Article recommends renovating the design of Facebook to privilege privacy practices and proposes specific interventions drawn from the computer science and behavioral economics literature. It concludes with a message of cautious optimism for the emerging coalition of engineers, academics, and practitioners who care about privacy on networked publics.

The Article is a heavily revised adaptation of the thesis I conducted for Ethan Katsh and Alan Gaitenby at the University of Massachusetts, Amherst. If you’ve read my thesis (entitled “Saving Face”; title changed to avoid confusion with James Grimmelmann’s excellent Saving Facebook, recently published in the Iowa Law Review), then you’re familiar with the broad contours of the idea.

Losing Face, however, has been both greatly refined in its argumentation and noticeably reworked in its format (bah Bluebook) over the last year or so. I received invaluable feedback and assistance from many people during this drafting process, including Helen Nissenbaum, researchers and interns at the Berkman Center for Internet and Society, and, most indispensably, James Grimmelmann, who helped me navigate the convoluted and mystified norms and logistics of the publication process.

I’ve posted a copy of the Article here and on BePress for further comment while it wends its merry way through the editorial process. This is a draft only, and should not be used for citation. I’ve endeavored to make all references as clear as possible, though some are not as clear as they will be in the final version because I haven’t nailed down all the infras and supras yet. If you have any questions, comments, or concerns about Losing Face, please feel free to drop a comment here or shoot me an email.

Things Are Looking Grimm

by chris on Jun.22, 2009, under general, papers

Sorry, I couldn’t resist.

Professor James Grimmelmann of New York Law School was kind enough to give my Facebook working paper a shoutout on his blog. In case you haven’t read it, his article on Facebook and the Social Dynamics of Privacy (forthcoming in the Iowa Law Review) is probably the best work done in this field thus far.

He’s a swell guy who has been very helpful orienting me in academic space and teaching me fun new words like desuetude, which is really the point of an intellectual mentor anyway.